What It Does

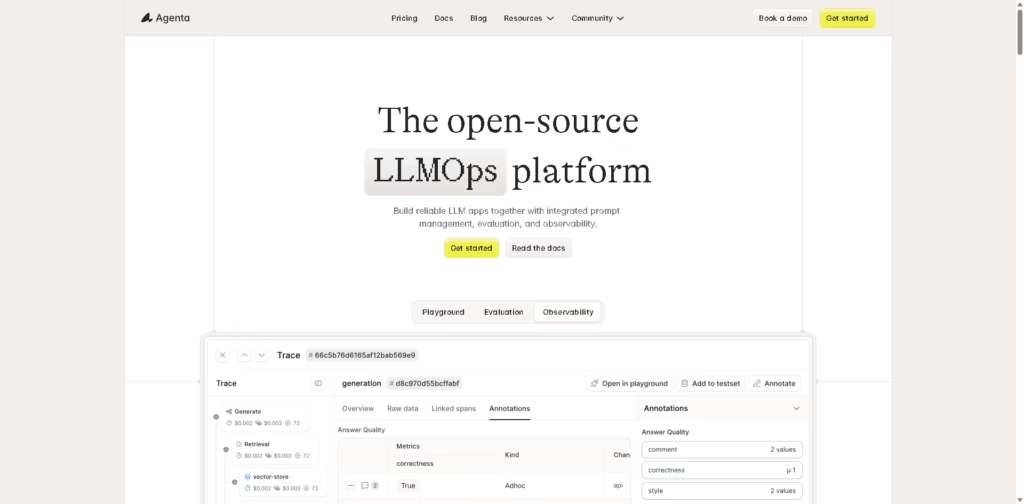

Agenta is an open-source LLMOps platform that helps teams build, test, evaluate, and monitor AI applications, such as chatbots and AI agents, in one place.

It brings structure to messy workflows by combining prompt management, experimentation, and observability into a single, collaborative system.

Key Features

- Unified Playground: Compare prompts and models side-by-side to find what works best.

- Prompt Versioning: Track every change with a complete version history.

- Automated Evaluations: Run structured tests to measure performance and validate improvements.

- Full Trace Analysis: Inspect every step of an AI response, not just the final output.

- Observability & Debugging: Track requests, detect errors, and locate where a failure occurred.

- Human Feedback Integration: Add input from domain experts directly into evaluations.

- Collaboration Tools: Bring developers, product managers, and experts into one workflow.

- Model-Agnostic: Works with any LLM or framework, with no vendor lock-in.

- Open-Source Flexibility: Fully customizable and transparent for developers.
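
To make the ideas of prompt versioning and automated evaluation more concrete, here is a minimal sketch in plain Python. It does not use Agenta's SDK or reflect its actual API: `PromptRegistry`, `evaluate`, and `fake_model` are hypothetical names invented for illustration, and `fake_model` is a stand-in for a real LLM call. The sketch only shows the general pattern of keeping a version history and scoring each version against the same test cases.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class PromptRegistry:
    """Hypothetical in-memory version history for a single prompt."""
    versions: list[str] = field(default_factory=list)

    def commit(self, template: str) -> int:
        """Store a new version and return its 1-based version number."""
        self.versions.append(template)
        return len(self.versions)

    def get(self, version: int) -> str:
        """Return the template stored under the given version number."""
        return self.versions[version - 1]


def evaluate(
    template: str,
    model: Callable[[str], str],
    cases: list[tuple[str, str]],
) -> float:
    """Return the fraction of cases whose output contains the expected text."""
    passed = 0
    for question, expected in cases:
        output = model(template.format(question=question))
        if expected.lower() in output.lower():
            passed += 1
    return passed / len(cases)


def fake_model(prompt: str) -> str:
    """Stand-in for an LLM (assumption): answers only when asked to be concise."""
    if "concise" in prompt and "capital of France" in prompt:
        return "Paris"
    return "I am not sure."


# Record two prompt versions, then score both against the same test set.
registry = PromptRegistry()
v1 = registry.commit("Answer: {question}")
v2 = registry.commit("Give a concise answer: {question}")

cases = [("What is the capital of France?", "Paris")]
scores = {v: evaluate(registry.get(v), fake_model, cases) for v in (v1, v2)}
print(scores)  # {1: 0.0, 2: 1.0}
```

Scoring every version against a fixed set of cases is what turns "this prompt feels better" into a comparison that can be repeated after each change.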

Who Is Agenta For?

- AI Developers & Engineers: Build and debug reliable AI applications faster.

- Product Managers: Track experiments and ensure continuous improvement.

- Data & Domain Experts: Contribute insights without needing coding skills.

- Startups & AI Teams: Create structured workflows for scalable AI development.

Final Thoughts

Agenta solves a common pain point in AI development: chaotic workflows and a lack of visibility. Instead of juggling tools and guessing what works, it gives teams a clear, structured system to experiment, evaluate, and improve AI continuously.

If you’re building serious AI products and want better control and collaboration, Agenta is a solid open-source option to explore.